By Julian van der Kraats, Leiden University, the Netherlands.

A year after the Fürth Manifesto, the landscape has shifted. The question is whether our foundations have kept up.

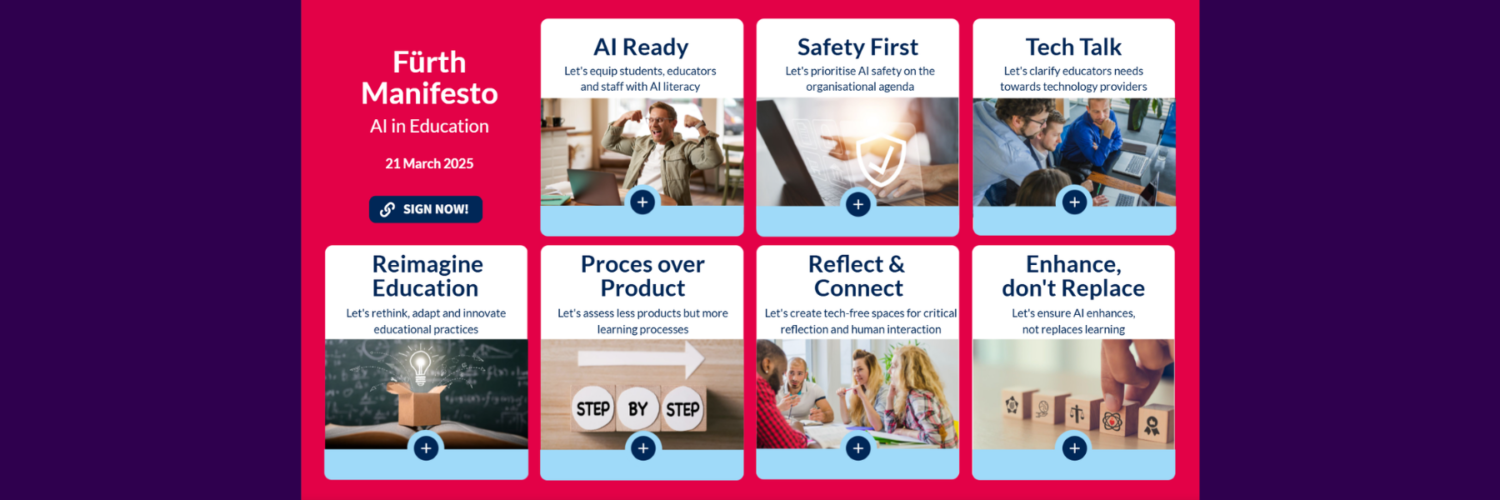

In March 2025, a group of educators, instructional designers and other educational professionals gathered in Fürth, Germany, for an intensive week of exchange on AI in higher education. Hosted by FAU Erlangen-Nürnberg under the banner of the Media & Learning Association, the week culminated in something unusual: a collectively written manifesto. Not a policy brief, but a statement of shared principles, signed by all participants.

The Fürth Manifesto on AI in Education outlines seven principles for the responsible and effective integration of AI in teaching and learning. A year on, those principles deserve a closer look.

What the Manifesto says

The Manifesto’s preface sets the tone: the core focus must remain on learning, which is inherently complex. AI’s affordances are promising but not guaranteed to help. Those responsible for education must design thoughtfully and support learners in shaping their own learning.

From that starting point, the seven principles address the ground that any institution integrating AI needs to cover. The first calls for AI literacy for everyone: students, educators, and staff alike should understand how AI works, recognise its implications, and learn to use it critically and creatively. The second highlights AI safety as an organisational priority, urging educators to advocate for sufficient resources and multidisciplinary approaches to address risks to fundamental rights, privacy, and cybersecurity. The third principle asks institutions to articulate a clear educational vision for AI use: accountable, actionable, accessible, ethical, and pedagogically grounded.

The fourth reframes AI less as a disruption in itself than as a mirror that reveals outdated practices and existing gaps, and calls on educators to use this moment to rethink and innovate.

Principles five through seven address assessment, balance, and purpose. The fifth acknowledges the shift from evaluating products to evaluating processes, insisting that students retain full responsibility for their work. The sixth makes the case for tech-free spaces that foster critical reflection, creativity, and deeper human interaction. And the seventh ties it all together: as AI’s possibilities grow, education must clearly define and safeguard its core institutional purposes and values.

Where the pressure is building

A year is a long time in AI. When the Manifesto was written, generative AI was already familiar in education. Since then, multimodal and reasoning-capable models have matured, agentic systems have begun to emerge, and AI is becoming less of an occasional tool and more of a normalised presence in study: woven into daily workflows and increasingly acting as a companion and coach for students.

This shift puts real pressure on several of the Manifesto’s principles. Take AI literacy. The Manifesto rightly identifies it as foundational, but is understanding how AI works still enough? When students are expected to delegate tasks to AI, orchestrate multi-step workflows, and critically evaluate AI outputs in complex professional contexts, we may need to think beyond literacy toward something more like AI fluency, or even co-creative competence.

Or consider assessment. The principle that assessment should shift toward evaluating learning processes rather than final products is sound, but when AI can assist at every stage of the process, the very notion of individual accountability becomes harder to pin down.

And then there is the broader question of purpose. As AI moves from tool to infrastructure, from something a student occasionally consults to something embedded in the learning environment itself, do we need new principles entirely? Or do the existing ones still hold, provided we interpret them with sufficient ambition?

The conversation continues

The value of the Fürth Manifesto was never that it settled these questions. It was that it created a shared vocabulary and a set of commitments that professionals across disciplines and institutions could rally around.

But foundations need stress-testing. The themes emerging across the community (the limits of AI literacy, the evolution of core competencies, the challenge of assessment, the social impact of AI companionship, and the shift from AI-as-tool to AI-as-infrastructure) all point to areas where the Manifesto’s principles need not replacement, but extension or addition.

What that looks like cannot be determined by any one person or institution. It requires the kind of multidisciplinary conversation that produced the Manifesto in the first place: educators alongside technologists, policy makers alongside pedagogues, philosophers alongside practitioners. Not quick consensus, but productive friction.

If that kind of conversation appeals to you, it is exactly what we will be pursuing at the Media & Learning Conference 2026 in a forum-style discussion with several of the Manifesto’s authors. Join us, and bring your strongest opinions.
