by James Rutherford, City, University of London, UK.

With a nod to the ALT Conference recently held at Warwick University, learning technology is a key topic of discussion across higher education as universities and colleges prepare for the start of a new academic year. As we know, educational technology brings significant value to higher education in numerous ways, but some misconceptions persist after the COVID-19 pandemic, despite the huge pivot to online learning and the growth of hybrid and multi-modal learning. This list is not exhaustive, but it arguably captures the main benefits:

- Increased student engagement and richer learning experiences

- Enhanced collaboration and communication

- Access to a wealth of resources and information

- Technology can be designed for greater accessibility, lowering barriers and improving inclusivity

- Learning technology allows for flexibility, including remote participation

- Opportunities for tailored learning experiences and enhanced creativity

- Improved efficiency, cost savings, and productivity, allowing more time for teaching

- Development of vital skills for life, digital literacy, and real-world capabilities in the workforce

- Technology support for research activities and dissemination

- Professional development and lifelong learning opportunities for staff, alumni, and communities

- During crises like the COVID-19 pandemic, educational technology has proven to be an invaluable tool for rapid adaptation to changing educational needs

Common misconceptions

Yet there are a number of repeated myths and obstinate views that we still hear, and not just from our more sceptical colleagues within higher education. This litany is not in any way intended to be academically comprehensive:

- Contrary to the perception that educational technology is costly and not a worthwhile investment, it can provide long-term savings through efficiency, productivity, and effectiveness, even though some technology is expensive upfront. The costs of not using educational technology may be greater.

- Although technology has the potential to be engaging, it does not automatically guarantee engagement. It is important to carefully design and implement learning activities that incorporate appropriate technology in a meaningful way.

- The idea that students can effectively multitask with technology is contradicted by research, which indicates that focusing on one task at a time is more conducive to learning.

- The myth of ‘digital natives’ suggests that younger generations are inherently skilled with technology, but in reality, many students still require guidance and instruction on how to use technology effectively for learning.

- Technology is a tool, not a solution to all educational challenges. It is essential to have a well-considered strategy for integrating appropriate technology into our learning spaces, using it to enhance the learning experience rather than as a replacement for traditional teaching methods.

- The view that technology is difficult to control and hampers learning does not hold when it is properly planned and managed in learning spaces. Learning outcomes have been noted to improve when technology is integrated seamlessly into the learning process without causing disruption.

- Technology can facilitate more effective communication and collaboration; however, it should not replace the valuable face-to-face interactions between lecturers and students, or between students and their peers, hence the return-to-campus initiatives across HE since the COVID-19 pandemic.

What does the future hold for educational technology and what are the implications for higher education?

Well, the future does look interesting, with advancements in technology offering real educational opportunities to staff and students, but they should be taken with a pinch of reality salt. The models and concepts below, while not exhaustive, are chosen for their inherent potential for higher education:

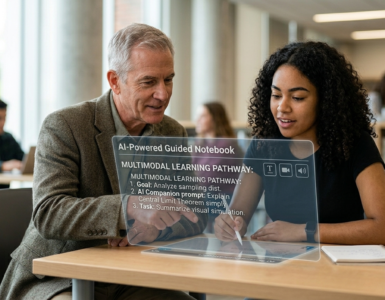

- AI-powered learning applications will no doubt become more prevalent, and AI is already a hot topic in higher education, generating enormous amounts of discussion. AI will probably offer tailored learning experiences for students, provide automated feedback, and enable new assessment tools. But these virtually endless opportunities will also raise many concerns over the risks to higher education.

- As Virtual Reality and Augmented Reality technology improves, the potential applications for education are boundless. These technologies may offer students unique educational opportunities for immersion and interaction. Before being widely adopted in higher education, AR and VR must first go through essential strategic and decision-making processes, followed by carefully considered programme trials on campus.

- On-campus technology such as the new ‘Flexible Displays’ will potentially allow for more interactive and engaging learning experiences. Compared with conventional LCDs, flexible screens have a number of advantages, including energy efficiency, durability, pliability, and portability. These could transform display spaces and democratise where and what is displayed in class.

- Also on campus, we can expect innovations such as smart classrooms as a complement to the smart campus, which is not a new concept. On a “smart campus”, the university’s buildings, infrastructure, and energy plants are all monitored as part of a “living lab”. Daily operations are improved by this data and the new connectivity, which also has the potential to speed up experimentation, innovation, and collaboration among a variety of academic fields. Smart classrooms may be equipped with machine learning, sensor networks, cloud computing, and accessible hardware, along with learning analytics tools that can track student progress and provide personalised feedback.

- Game-based learning is becoming more popular, perhaps in the USA and China to begin with, as educators develop modules that show how it can engage students, encourage critical thinking, and improve information retention. Games can also facilitate interdisciplinary learning by simulating complex real-world scenarios, an approach that could help students develop a more holistic understanding of their subject matter.

- Hybrid learning and teaching is not a future approach: it is happening now and is still developing, as I have written in the Educause Review. Hybrid learning modalities that combine online and in-person teaching have become more prevalent, and perhaps more carefully considered, since the pandemic, providing a wider student demographic with greater flexibility and access to educational experiences and programmes.

- Technology will continue to be developed that could support students with disabilities, unlocking learning experiences previously unavailable to them. It is anticipated that assistive technology in higher education will become more interactive and inclusive, for example by incorporating mainstream devices. Human-Computer Interaction, Extended Reality, the Internet of Things (which sounds positively futuristic), and even the NASA-designed concept of Digital Twins could well be incorporated into the curriculum of the future.

However, as this article states at the outset, it is crucial to carefully plan and carry out learning activities that appropriately and meaningfully incorporate technology. The realisation of educational technology’s potential demands a multidisciplinary approach involving educators, administrators, policymakers, students, parents, developers, service providers, and researchers.

In conclusion, it is surely reasonable to predict that by promoting equity and access, improving teaching and learning quality, and preparing students for success in the digital age, educational technologies now and in the future have the potential to greatly enhance higher education. However, a recent paper looking at the trends gathered by UCISA (Universities and Colleges Information Systems Association) in its technology-enhanced learning (TEL) survey data analyses (2018–2022) suggests that, to achieve permanent change, it is imperative to establish supportive foundations, including incentives and professional development for academic staff, to allow for sustainable technological innovation and the adaptation of teaching methods.

Author

James Rutherford, City, University of London, UK