by Zac Woolfitt, Inholland University, The Netherlands.

AI and Higher Education – what should we do?

How are you managing the rapid development of AI in your educational context? Generative AI is forcing us to question what we should teach, what students should learn, and how we should assess. We are facing some major (and very interesting) challenges.

Higher education institutions, including my own, are working on creating clear guidelines, but AI, and the ways it is being used, often moves faster than we can. Moorhouse, Yeo and Wan (2023) compiled ideas from the world’s top-ranking universities: ‘Despite the proven and potential benefits of AI in education, the disruptive nature of the general release of GAI technologies to common assessment practices has led to widespread calls for HEIs to develop comprehensive guidelines pertaining to their use’.

Arene, the Rectors’ Conference of Finnish Universities of Applied Sciences, shared its guidelines in May 2023. They cover ethics, responsibility, data protection, competence, guiding, enabling, sharing, training, monitoring and staying up to date. As the introduction clearly states: ‘These guidelines […] are not joint guidelines for universities of applied sciences. Universities of applied sciences must prepare their own guidelines independently.’ In its September 2023 report, UNESCO states: ‘AI must not usurp human intelligence. Rather, it invites us to reconsider our established understandings of knowledge and human learning.’

The use of AI is causing uncertainty and concern: ‘Examples include but are not limited to plagiarism deception, misuse or lack of learning, accountability, and privacy. There are both concerns and optimism about the use of ChatGPT in education, yet the most pressing need is to ensure student learning and academic integrity are not sacrificed.’

Finding myself in the middle of these developments, I collected data from one of my groups of students.

10 ways students used AI

I teach tourism at a university of applied sciences in the Netherlands. In the 20-week course I ran from September 2023 to January 2024, a group of 42 students (international and Dutch) each worked on an individual assignment: a 6,000-word research report in English developing a tourism brand for a small-scale destination.

In September, I met the students and explained my perspective: for teaching and learning to be meaningful, it must operate in a context of trust. I need to trust my students, and they need to trust me. I asked them to honestly self-report any use of AI as they worked on the assignment; I had no way of preventing them from using it. My institution’s guidelines require any AI use to be openly stated, clearly sourced and correctly referenced at all times. Submitting AI-generated work without indicating its origin is plagiarism and is treated as academic fraud.

Throughout the 20 weeks, I reminded them to be transparent, to build trust, and to share their uses of AI. I also openly shared my own ChatGPT prompts with them, and the ways I was using the tool.

Data from 26 students

Thirty-six final reports were submitted. Of these students, 26 self-reported that they had used AI while producing their report. One further student explicitly stated they had not used AI, and nine students did not specify either way.

I collected all their descriptions of AI use into one document, fed this into a ChatGPT prompt, and asked it to summarise and synthesise the text into 10 key points. Here is the summary.

Summary of student self-reported AI use (summarised by ChatGPT)

- Usage of AI Tools: Various AI tools were employed for different purposes, including grammar checking, paraphrasing, translation, summarization, and information retrieval.

- Grammar and Language Enhancement: Students consistently used AI, particularly Grammarly and ChatGPT, to improve grammar, rephrase sentences, and enhance the overall quality of their language.

- Translation Support: AI tools such as ChatGPT, DeepL, and Google Translate were used for translating texts and interviews from Dutch to English, aiding students in overcoming language challenges.

- Information Retrieval and Summarization: ChatGPT was utilized for summarizing documents, creating summaries of stakeholder interviews, and retrieving information efficiently from various sources.

- Slogan Generation: ChatGPT was specifically employed for generating ideas for slogans, demonstrating its creative application in branding assignments.

- Efficiency Improvement: AI played a role in improving efficiency, for instance, in translating interviews, refining introductions, and assisting with market segmentation explanations.

- Enhancement of Research Insights: AI, especially in the context of tourism branding, was used to analyze data, identify trends, and gain deep insights into psychographics, contributing to a more thoughtful approach in research.

- Transparent Acknowledgment: Several students explicitly acknowledged the use of AI for transparency, stating when AI tools like ChatGPT were utilized for reformulating sentences or generating ideas.

- Diverse AI Tools: Apart from ChatGPT, students employed other AI tools such as Otter.ai, QuillBot, and Perplexity for various purposes like interview transcription, paraphrasing, and source exploration.

- Overall Supportive Role: AI played a supportive role in multiple stages, including information gathering, text generation, language refinement, translation assistance, and analytical support, contributing to the development of comprehensive and well-crafted reports.

My perspective on assessing AI-assisted reports

The ten points above are not a scientific analysis and are based on a small number of self-reported examples, but they do offer some insights. As I read and assessed the reports, I reflected on these points and drew the following conclusions:

- Asking students to be honest and open, and to self-report their AI use, is an approach that worked for me. I trusted the students to do this, and in most cases they returned this trust. (Maybe I am overly naive.)

- Some students used the tools to enhance their learning and thought process; in general, those reports were better. Other students used AI as a shortcut, to save time on thinking and learning; in general, those reports were not as good.

- At the moment, it is still fairly easy for an experienced assessor to sense quickly whether text was written by a student or has gone through the ‘AI washing machine’. I saw vocabulary, terminology and styles of writing that I had never seen students use before, which is an immediate red flag. When I know the student has openly admitted using these tools, it is much easier for me to read through the ‘AI-adjusted text’ to get to the student’s intended meaning. I realise I may have missed AI-written text that was not reported, and such text may become harder to spot.

- When a student relied too heavily on AI to write and rewrite all their text, they lost their original ‘writing voice’ and the text became very generic to read. As an assessor, I ‘lost interest’ in reading text that had clearly been 100% adjusted by AI, and I felt I was taking the report, and the student’s effort, ‘less seriously’.

- Where AI image programmes were used to generate tourism logos, the results were disappointing: generic, inappropriate images that looked like wine labels or illustrations for children’s books. Where students then reworked these AI versions, the final results were often better. Logos that were fully AI-generated were not evaluated highly. This may change soon as AI image generation improves, or with better prompts (e.g., adding examples of top tourism logos to the prompt and then generating AI logo examples based on those).

- From an assessor’s perspective, reading a report that has been extensively written by AI also undermines my sense of the work the student has done. There was a clear distinction between students who were specific and selective in their use of AI and those who were not. The selective users often ended up with a good grade, reflecting their targeted use of AI for specific goals. For the students who used AI in a blanket way, their own arguments, reasoning, writing voice and thought processes became ‘diluted’, and their reports were less engaging to read.

- I read each student’s final ‘reflection’ at the end of their report. Overall, students found the assignment a lot of work but enjoyable. The tasks required to complete it remain fairly AI-proof (interviewing stakeholders, visiting the destination, designing a logo and brand). Using AI did not reduce the effort needed to complete the assignment as a whole, although it did help in the areas indicated above, and students still had to put in a lot of hard work. Their reflections showed they were mostly satisfied with their own effort, while also using AI for support.

What might come next?

- Looking ahead, a student might be able to upload all their previous writing assignments into a private AI environment and ask it to create their own unique ‘writing profile/style’. They could then instruct the AI to match this style in any future content it generates for them. Some businesses are already doing this for their ‘house style’ of text generation. We can expect AI to keep improving and to become much less detectable.

- Fully (100%) AI-proof assignments may not exist in the future, except when students write a paper by hand in an exam room or present in person to assessors in an interview. It is important to ensure that learning includes real-world tasks, such as conducting interviews, designing and synthesising ideas, and showing iterations over a period of time with input from stakeholders. We can also focus on formative feedback during the learning process, rather than a single summative assessment at the end. Each educational institution needs to continuously clarify and refine its AI guidelines. Assignments should be restructured ‘to promote critical thinking while preventing academic misconduct under high time pressure’.

- As a researcher, you may have to transcribe and translate interviews, re-listen to recordings and type them up. This puts you closely in touch with the primary data and can help you understand the research topic in more depth. However, several students used AI to transcribe and translate their primary data. This saves time, but they may miss the deep experience of data analysis. I wonder whether the research experience will lose some of its depth when AI fills in for us.

- I shared all my reflections above with the students, which gave them insights to develop their own understanding of the pros and cons of using AI. Where a report contained too much generic AI text, I graded it lower and explained why. I will continue to experiment and reflect on how my students are using these powerful tools, and ask them to reflect on how the tools are affecting their learning process.

How AI literate are you?

At this interesting moment in the history of education, we are all playing catch-up to develop our AI literacy. It is our responsibility to stay informed of developments, to be aware of the biases and pitfalls of these tools, and to experiment with them. What might come next?

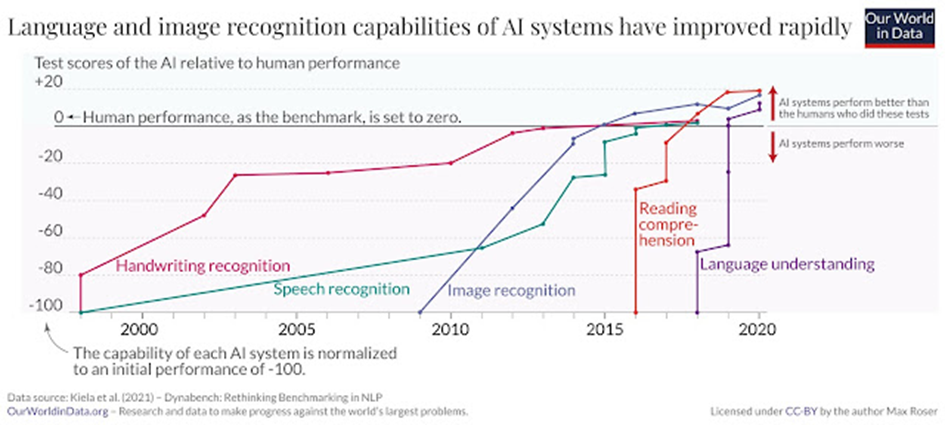

Language and image recognition by AI now outperforms humans (Roser/Kiela et al., 2021)

This graphic (from Max Roser) shows that we should not underestimate how quickly AI can surpass our human capabilities.

Which of our human capabilities will AI surpass next: creativity, empathy, our humanness...?

I passionately believe that only by creating trust between educator and learner can we create meaningful and authentic learning experiences.

For comments, suggestions or corrections, please contact me directly at zac.woolfitt@inholland.nl.

AI was not used to rewrite any of this report (apart from synthesising the raw data into 10 points).

Author

Zac Woolfitt, lecturer and researcher, Inholland University in the Netherlands