by Zac Woolfitt, Inholland University, The Netherlands.

On 24th May, seven experts from higher education presented during the Media and Learning webinar ‘AI in Higher Education: opportunities and threats’. From Sweden there were speakers from Lund University and Luleå University of Technology, and from the Netherlands, the University of Amsterdam and Inholland University of Applied Sciences. I was excited to moderate this session and to be part of the discussion.

Artificial Intelligence has been the hottest ticket in town since the cat was let out of the bag, and things are developing quickly. It is difficult to grasp exactly what is happening and at what speed. AI looks set to keep developing exponentially over the next year, 5 years, 10 years. AI absorbs vast amounts of data to improve itself, while its rough edges are smoothed by ‘training’ with input from humans. That combination results in a learning curve steeper than anything humans can readily comprehend.

Where would you place us on this curve?

Truthfulness is a side effect.

Jelle Zuidema, Julia Dawitz and Emma Wiersma from the University of Amsterdam presented first. As an associate professor in Natural Language Processing, Explainable AI, and Cognitive Modelling, Jelle was perfectly placed to introduce the subject.

Jelle explained the rapid growth of Large Language Models (LLMs) that led to ChatGPT as a disruptive technology. We need a basic understanding of the technology if we are to estimate what it might lead to. He covered three points: 1) the four key innovations that make it possible: next word prediction and scale, the ‘transformer architecture’, in-context learning and Reinforcement Learning from Human Feedback (RLHF); 2) why guaranteeing truthfulness is so difficult (the ‘hallucination problem’); and 3) why reliable detection of the truth is so difficult. The tools are based on truly gigantic data sets of hundreds of billions of words. The answers are ‘optimised to be plausible and pleasing.’ By their very nature, the answers appear friendly and reasonable. Detecting falsehood is more difficult when a machine presents it in a way that humans perceive as truthful.
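To make the idea of next word prediction a little more concrete, here is a minimal sketch in Python. It is not how ChatGPT works internally (real LLMs use the transformer architecture and vastly more data); it is a toy word-pair counter, and the function names and the tiny made-up corpus are my own assumptions for illustration. What it does show is that a predictor of this kind returns the most frequent continuation it has seen, whether or not that continuation is true.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(model, start, length=5):
    """Extend `start` by repeatedly appending the most likely next word."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(model, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


# Hypothetical training text: the false claim appears more often than the true one.
corpus = (
    "the moon is made of cheese . "
    "the moon is made of cheese . "
    "the moon is made of rock ."
)

model = train_bigram_model(corpus)
print(generate(model, "the", length=5))  # prints: the moon is made of cheese
```

The toy model confidently completes the sentence with ‘cheese’ because that is the most common continuation in its data. In this sketch, truthfulness really is only a side effect of what the training text happens to contain.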

Our responsibility to train students and colleagues to use LLMs properly.

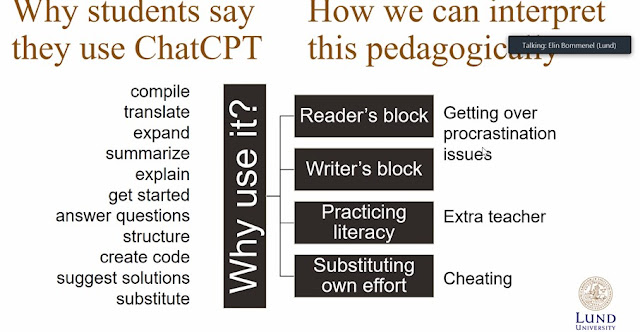

His colleagues Julia and Emma shared some practical examples of how to implement Generative AI in your teaching. They have developed a workshop for lecturers on ways of using AI in their classes. They explained that LLM applications can help lecturers generate new questions or check student learning. Students can also use them to improve their learning by generating learning aids such as flash cards. As educational organisations, we are responsible for training students and staff in how to use these models correctly.

Is AI a blessing in disguise?

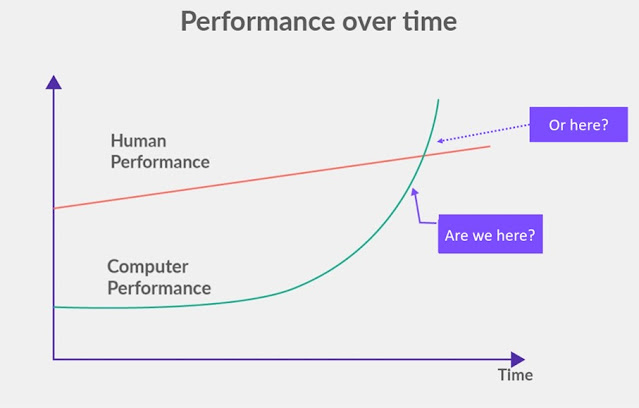

Elin Bommenel is an associate professor of Service Studies at Lund University and is running a project to prevent cheating at her university. She presented the historical context. For the last four hundred years, faculty have been going about their own business, and management have been trying to ‘herd cats’. However, after the recent arrival of ChatGPT, the 8,000 faculty suddenly turned to their vice chancellors for guidance. That is when you realise that something is going on. There is no ‘tacit knowledge’ embedded in the walls of the university for this situation, so it will require an innovative approach. Elin referred to the cognitive bias known as the Dunning-Kruger effect (in which people overestimate their knowledge or ability in a specific area) and suggested that, with LLMs, students are particularly prone to overestimating their understanding of the tools, since the answers appear convincing and friendly and the tools are so easy to use.

Finally, there is the question of how well-equipped higher education teachers are to help grown-ups learn. We have to teach students to ‘look out for answers that they can’t trust’. The only way to do this is to ensure that students have a university education that develops the skills, critical thinking and awareness needed to judge whether something is true or not. We have to develop academic literacy by focusing on 1) academic skills, 2) socialization and 3) developing an epistemological position.

How should we interpret why students use ChatGPT from a pedagogical perspective? (screenshot from E. Bommenel)

It is a student’s lack of understanding of the tools that leads to misuse of the tools as a ‘substitute for their own effort, e.g. cheating’. There is a greater than ever need to ensure each university has a pedagogical unit that can support faculty and students through this process.

Becoming better prompt engineers to ask questions about our own organisations

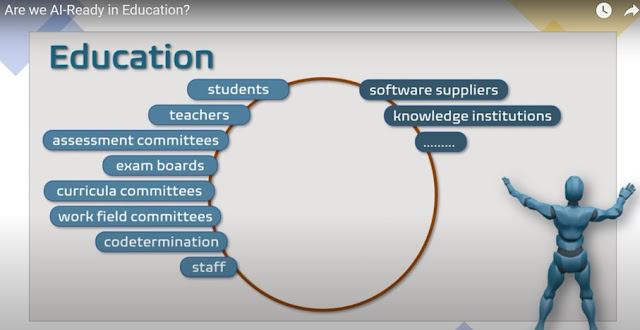

My colleague Peter Hollants from Inholland University presented his personal views on what is happening in this ‘paradigm shift’. He asked how we should manage the possible threats. How will the curriculum change, and with it our way of teaching and assessing? How should the school organisation support teachers with resources and instructions for using ChatGPT-like tools? And are we AI-ready? He focused on the importance of ‘prompt engineering’: learning how to formulate the right questions. There are many different parties involved within each educational organisation, and the challenge is how to facilitate the interactions between them. How will we govern these issues?

How can higher education facilitate multiple stakeholder interactions with AI? (screenshot from P. Hollants)

Tools are being developed to give us guidance on these questions and on ways to develop responsible practice in our interactions with Synthetic Media.

‘It takes some time, but AI always outperforms humans’

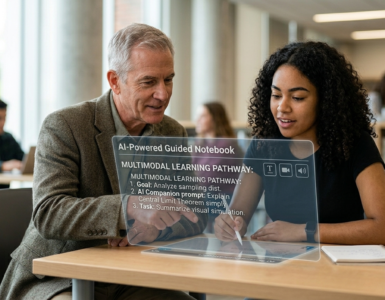

Peter Parnes (parnes.com) is a Professor in Pervasive and Mobile Computing at Luleå University of Technology. Peter looked ahead to what might happen next. ChatGPT broke all internet records, reaching 1 million active users in 4 days. And things have only moved more quickly since then. Generative AI has the chance to transform education by personalizing it and creating adaptive learning experiences. At some point we will be able to augment models such as ChatGPT with our own data, or supplement them with new data browsed from the internet. There will be ‘real-time’ interactions with live information being interpreted and applied.

Another example he presented was ‘Be My Eyes’, where AI interprets images into text for people with impaired vision. AI assistants will enhance individual learning through tailored support, and they have the potential to revolutionize teaching and learning. He is involved in a project with other Swedish universities that are developing their own AI technologies.

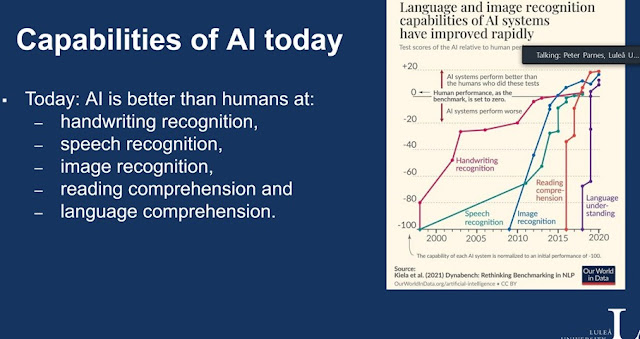

‘It takes some time, but AI always outperforms humans’ (screenshot from P. Parnes)

AI is currently not very good at understanding feelings or moods. The future holds improvements in emotional intelligence, personalization, increased memory size and mixing media. Khan Academy has integrated ChatGPT into its interactive platform to create a Socratic set of interactions with students.

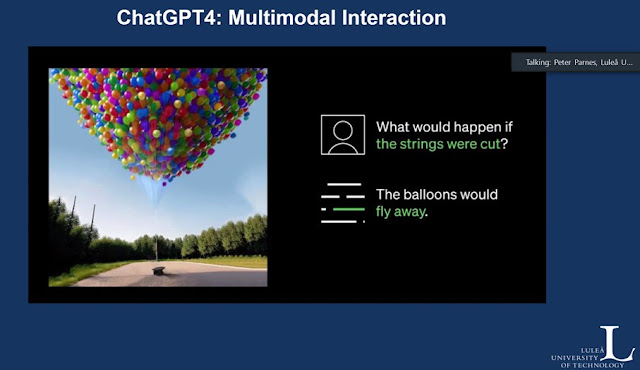

AI will improve at answering these types of questions (screenshot from P. Parnes)

Peter finished on a positive note by saying that AI can free up the teacher for the deeper discussions, which is where the real learning happens.

More questions than answers

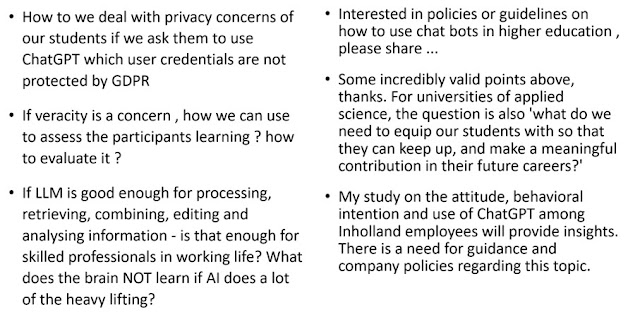

After the four presentations, there was an active discussion between the seven panelists and the webinar audience. We only touched on some of the ethical and moral issues of bias, access, safety and security. Some of the questions discussed are shown here.

The webinar audience asked more questions than we could answer

There are questions about what skills we need to teach and learn in an age of AI. This article explores the ‘capabilities’ that may be needed. A member of the audience mentioned the BLOOM LLM as a possible alternative for universities.

Reflection and next steps

These are exciting, and also worrying, times. There are very real reasons for concern, as addressed here in ‘The AI Dilemma’. The challenge is how we can benefit from AI and control it at the same time. The GDPR has had some recent success in limiting some aspects of big tech (e.g., Google’s Bard not being available in Europe, or fines for Meta for transferring data to the U.S.). But the speed of change means we need to keep moving quickly.

What does it mean to be human?

The rise of AI shines an extra light on what it is to be human, and on our own humanness. A few years ago, I got to experience ‘being a hologram’, and to interact with other life-sized holograms. When I came back to reality and started to talk to real humans, my first reflection was ‘Wow, now I can see how ‘human’ humans are’. The UNESCO report on AI and ChatGPT draws attention to this fact too:

‘Our shared humanity has also become a key focal point within higher education, as faculty and leaders continue to wrestle with understanding and meeting the diverse needs of students and to find ways of cultivating institutional communities that support student well-being and belonging.’ (UNESCO)

And it may seem counterintuitive, but the more AI that is introduced, the more we need to put humans in control and in charge of what is going on:

‘Seemingly polar ideas: the supplanting of human activity with powerful new technological capabilities, and the need for more humanity at the center of everything we do.’ (UNESCO)

Humans have a biological history rooted in the real world which determines our organic living existence. Working in partnership with AI is the only positive way forward.

The versatility of human intelligence (HI) compared to AI is related to its biological evolutionary history, adaptability, creativity, the ability of emotional intelligence, and the ability to understand complex abstract concepts. However, HI-AI cooperation can be beneficial if an accurate and reliable output of AI is ensured.

So what does it mean to teach, to assess and to learn in the age of AI? This is a rhetorical question that I continue to ask my colleagues and students. I don’t have the answers yet, but it is going to be a very interesting discussion. The futurist Bryan Alexander will give a talk later this year at OEB on ‘Instructors after AI’, and I wonder where the discussion will be a few months from now.

Thank you.

Thank you for reading this far. For comments, suggestions or corrections, please contact me at zac.woolfitt@inholland.nl. To stay up to date with the ongoing discussions, you can join the Media and Learning special interest group on AI.

Author

Zac Woolfitt, lecturer and researcher, Inholland University in the Netherlands